Ady Stokes

Freelance Consultant

He / Him

I am Open to Write, Teach, Speak, Meet at MoTaCon 2026, Podcasting, Review Conference Proposals

STEC and SQEC Certified. MoT Ambassador, writer, speaker, accessibility advocate. Consulting, Leeds Chapter Lead. MoT Certs curator. Testing wisdom, friendly, songs and poems. Great minds think differently

Achievements

Certificates

Awarded for:

Passing the exam with a score of 100%

Awarded for:

Passing the exam with a score of 100%

Activity

earned:

1.5.0 of Quality Coaching essentials

earned:

8.0.0 of MoT Software Quality Engineering Certificate

earned:

1.0.0 of Quality Coaching essentials

earned:

7.0.0 of MoT Software Quality Engineering Certificate

earned:

6.10.0 of MoT Software Quality Engineering Certificate

Contributions

Today, it's mostly a bad idea.

Why it no longer works well

Modern ATS platforms don't just count keywords. They:

Parse the structure of the CV.

Look for keywords in context. ...

World Cup ducks

Quark quark

Vibe coding, agentic engineering or coding, conversational programming, and others are terms for using AI (Artificial Intelligence, not actual intelligence) to create code, products and systems. Th...

Judy and Clare trade spicy hot takes, from notification overload and 'done > perfect' learning, to a passionate deep dive on accessibility as a moral (and business) imperative.

Software development is inherently discriminatory because we know about digital accessibility and still don't do it. We have the knowledge, the skills, and the moral imperative to include all ...

A lot of my week has been doing things in preparation for a family party for my 60th birthday. A good win was finding party food catering from the supermarket I normally get deliveries from. So the...

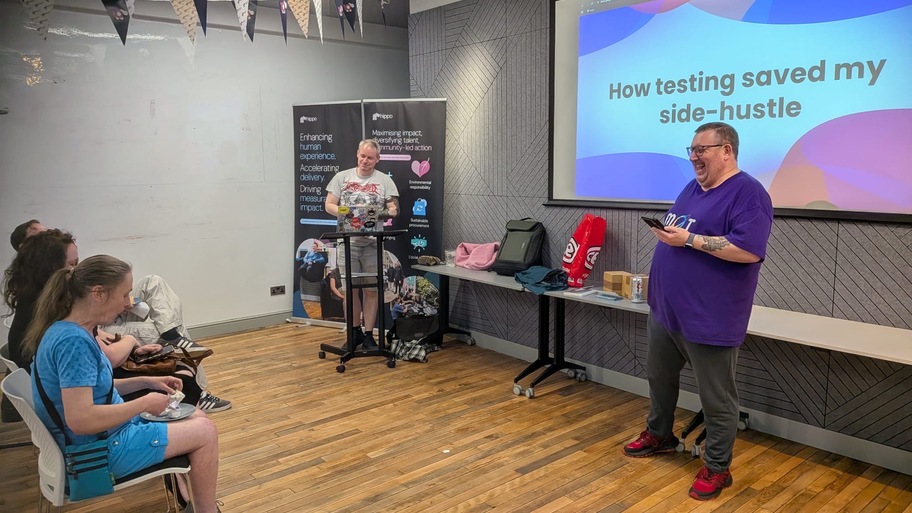

I always enjoy meet-ups and hosting them. Here I am introducing Colin, getting distracted by Scott and telling the audience corny jokes.

Good start. Apparently I’m on the wrong train. It arrived at the right time, no tannoy, no words on the side. Once it set off, it said it was the Bradford one. Looked at the app, Leeds is 11 minutes delayed. I’ll ...